LLMChat: Deploying Your Self-Hosted AI Stack

You've built it. Now deploy it. From Cloudflared tunnels to production hardening, this post ties the entire self-hosted AI ecosystem together: serving engines, chat interface, and code completion.

Hey, I'm Kukil. This is my journal - a running log of the things I'm building, breaking, and occasionally understanding.

If you like watching someone figure things out in real time, stick around. New posts whenever something interesting explodes (literally or figuratively).

AI/ML

Robotics

Microcontrollers

Automation

3D Printing

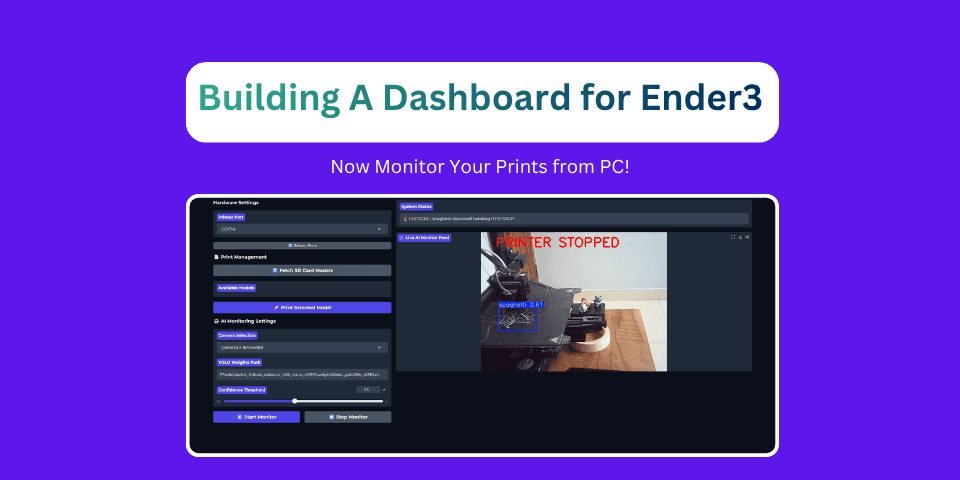

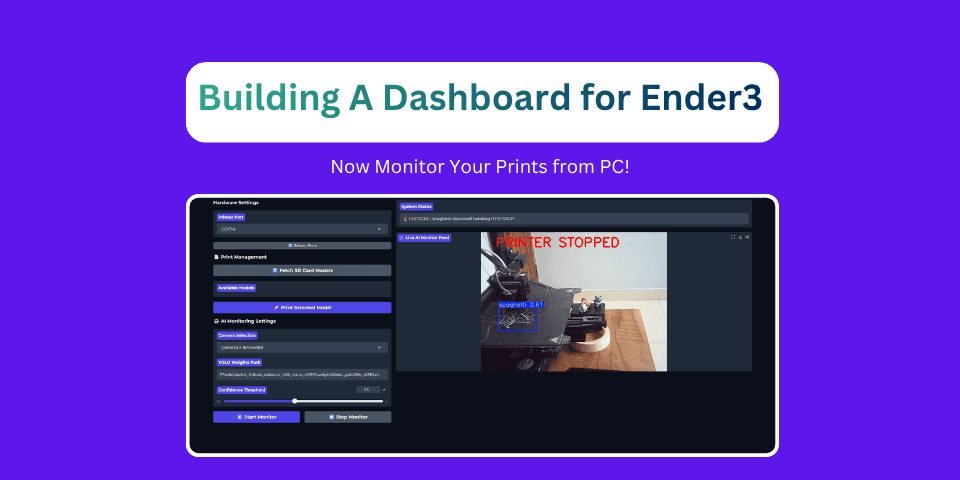

Ditch the SD card shuffle. Build a unified Gradio web dashboard that connects to your Ender 3 V2 Neo via USB, fetches G-code files from the printer's SD card, and runs real-time YOLOv26 print failure detection.

Explore my latest thoughts and tutorials

What if your chat interface could run LLMs without any server at all? LLMChat supports in-browser inference via WebGPU and WASM using Transformers.js. No backend, no API calls, no data leaving your machine.

Text-only chat is limiting. LLMChat now auto-detects vision models, integrates Tavily web search, and gracefully falls back when things go wrong. Here's how it all works under the hood.

LLMs are smart, but they don't know your documents. I added a RAG pipeline to LLMChat — upload a PDF, chunk it, embed it, and chat with it. Zero external infrastructure.
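For a rough feel of the chunk, embed, and retrieve steps mentioned above, here is a minimal Python sketch. It is not LLMChat's actual code: the function names are made up for illustration, and the "embedding" is a toy bag-of-words count standing in for a real embedding model. PDF text extraction is assumed to have already happened.

```python
import math
import re
from collections import Counter


def chunk_text(text: str, size: int = 200, overlap: int = 50) -> list[str]:
    """Split text into overlapping character windows (hypothetical helper)."""
    if overlap >= size:
        raise ValueError("overlap must be smaller than size")
    step = size - overlap
    windows = (text[i:i + size] for i in range(0, len(text), step))
    return [w for w in windows if w.strip()]


def embed(text: str) -> Counter:
    """Toy embedding: word counts. A real pipeline would call an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k chunks most similar to the query."""
    q = embed(query)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]
```

The retrieved chunks would then be prepended to the user's question as context before it goes to the model.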

New models drop on HuggingFace almost daily. LLMChat is a self-hosted ChatML interface — plug in any OpenAI-compatible endpoint, pick a model, and start chatting. No data leaves your infrastructure.
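As a sketch of what "plug in any OpenAI-compatible endpoint" means in practice, the snippet below builds a chat completion request using only the Python standard library. The base URL and model name are placeholders, and `build_chat_request` is a hypothetical helper, not part of LLMChat. It assumes the server exposes the usual `/chat/completions` route under its base URL.

```python
import json
import urllib.request


def build_chat_request(base_url: str, model: str, user_text: str,
                       api_key: str = "") -> urllib.request.Request:
    """Build (but do not send) a POST request for an OpenAI-compatible server."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": user_text}],
    }
    headers = {"Content-Type": "application/json"}
    if api_key:
        # Many local servers ignore the key; hosted ones usually require it.
        headers["Authorization"] = f"Bearer {api_key}"
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers=headers,
        method="POST",
    )


# Placeholder values; swap in your own endpoint and model.
request = build_chat_request("http://localhost:8000/v1", "my-local-model", "Hello!")
# To actually send it (requires a running server):
# with urllib.request.urlopen(request) as resp:
#     print(json.load(resp)["choices"][0]["message"]["content"])
```

Because the request shape is the same across compatible servers, switching serving engines should mostly come down to changing the base URL and model name.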

A comprehensive guide to common 3D printing failures. Learn how to identify, diagnose, and fix issues like spaghetti, stringing, zits, and warping.

Hi! I'm a passionate developer exploring the intersections of AI/ML, Robotics, Microcontrollers, and Automation.

Through this blog, I share my learnings, experiments, and best practices in these exciting fields.
